Selected Publications

- M^2PT: Multimodal Prompt Tuning for Zero-shot Instruction Learning. T. Wang, Y. Liu, J. C. Liang, J. Zhao, Y. Cui, Y. Mao, S. Nie, J. Liu, F. Feng, Z. Xu, C. Han, L. Huang, Q. Wang, D. Liu. The Conference on Empirical Methods in Natural Language Processing (EMNLP), 2024. [pdf]

-

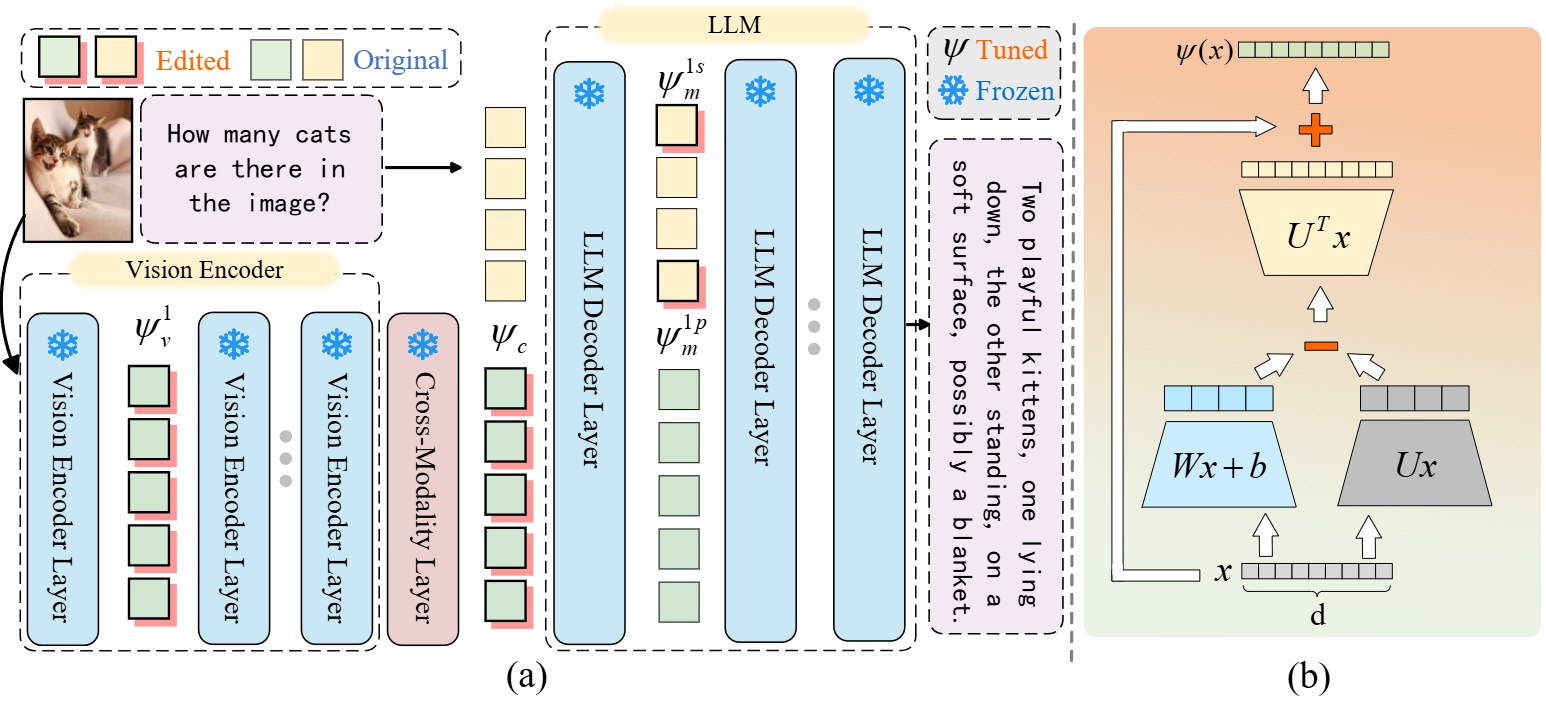

Re-Imagining Multimodal Instruction Tuning: A Representation View. Y. Liu, J. C. Liang, R. Tang, Y. Lee, M. Rabbani, S. Dianat, R. Rao, L. Huang, D. Liu, Q. Wang, C. Han†. The International Conference on Learning Representations (ICLR), 2025. [pdf]

-

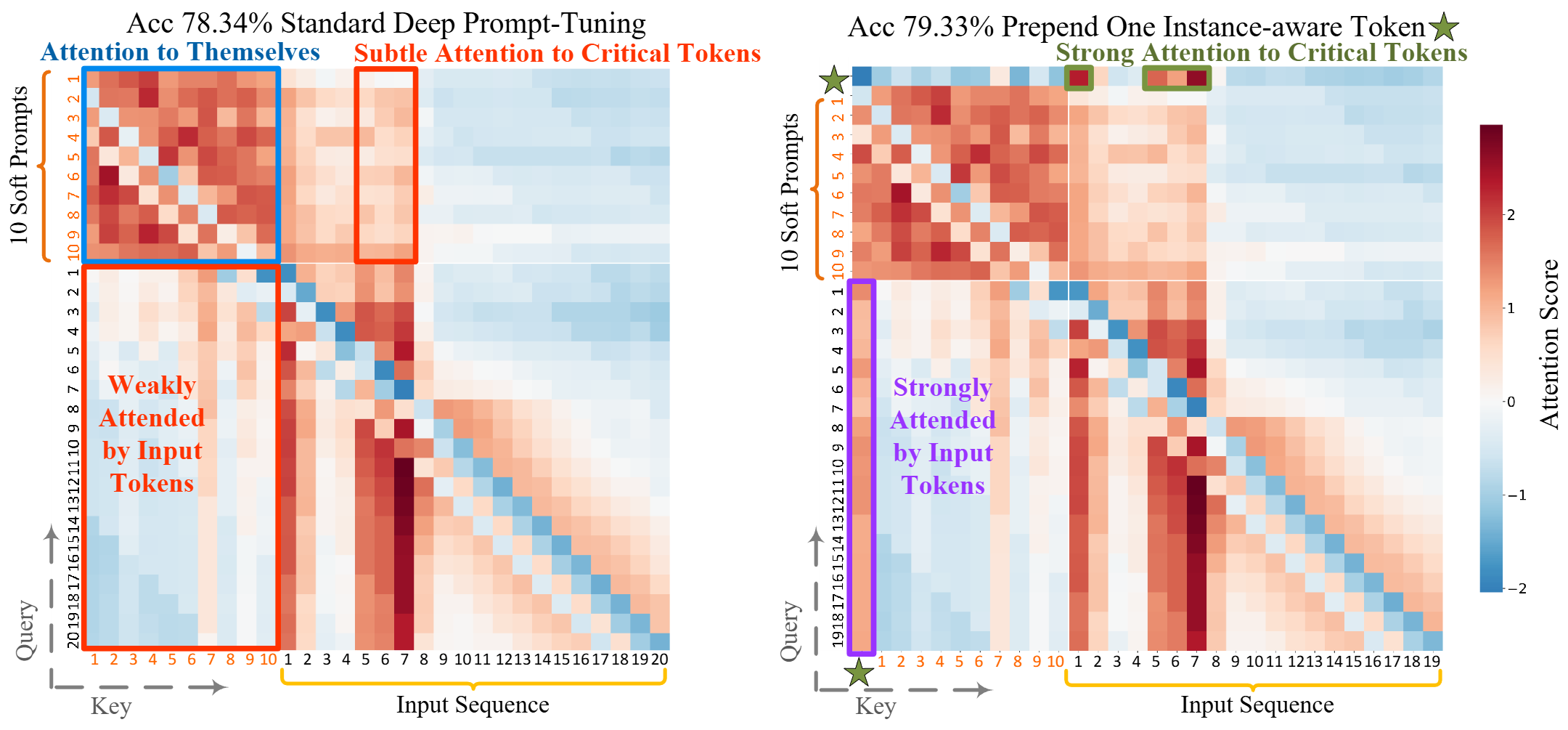

All You Need is One: Capsule Prompt Tuning with a Single Vector. Y. Liu, J. C. Liang, H. Fang, W. Yang, Y. Cui, X. Han, L. Huang, D. Liu, Q. Wang, C. Han. The Conference on Neural Information Processing Systems (NeurIPS), 2025.

- Prompt-based Adaptation in Large-scale Vision Models: A Survey. X. Xiao, Y. Zhang, L. Zhao, Y. Liu, X. Liao, Z. Mai, X. Li, X. Wang, H. Xu, J. Hamm, X. Lin, M. Xu, Q. Wang, T. Wang†, C. Han†. Transactions on Machine Learning Research (TMLR), 2026. [pdf][project page]

-

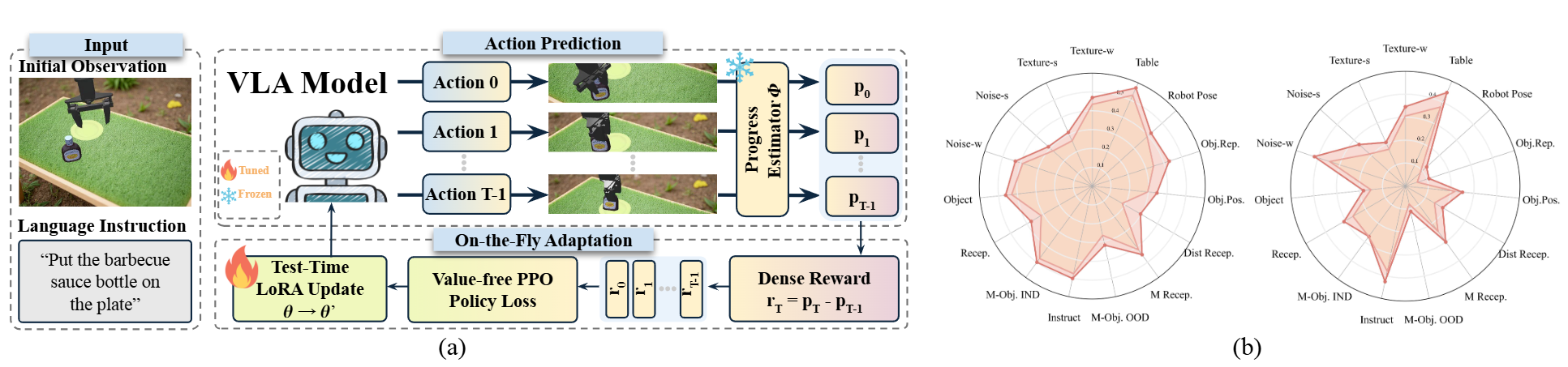

On-the-Fly VLA Adaptation via Test-Time Reinforcement Learning. C. Liu, Y. Liu, T. Wang, Q. Zhuang, J. C. Liang, W. Yang, R. Xu, Q. Wang, D. Liu†, C. Han†. The 64th Annual Meeting of the Association for Computational Linguistics (ACL Main), 2026.